Scaling LoRA to 1M Users

How a startup deployed multiple LoRA adaptations to serve millions of users with 99.9% uptime.

Read Case Study →

Production-ready deployment strategies for parameter-efficient AI models

Learn Deployment

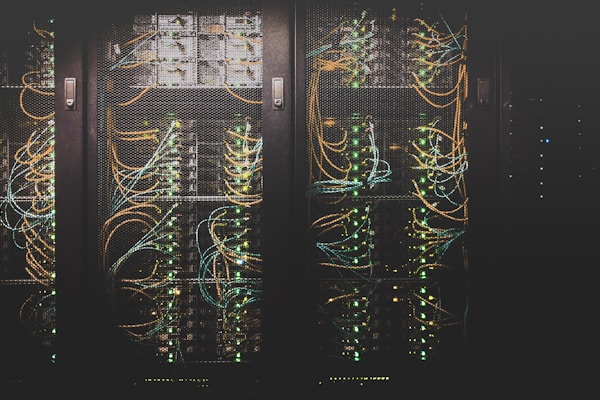

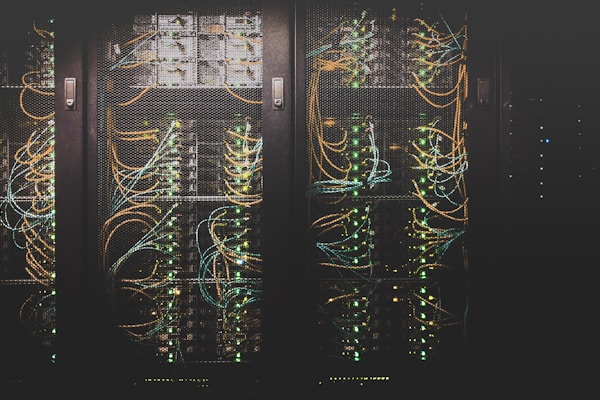

LoRA Delivery specializes in helping organizations deploy LoRA-adapted models to production environments efficiently and reliably. From local inference to cloud-scale serving, we provide comprehensive guidance on building robust MLOps pipelines for parameter-efficient AI.

Deploying LoRA models presents unique opportunities and challenges. The small size of LoRA checkpoints enables rapid model switching and efficient multi-tenant serving, but realizing these benefits requires careful architectural decisions and operational best practices. Our platform provides the knowledge and tools you need to succeed.
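As a rough illustration of the multi-tenant pattern described above, the sketch below keeps a single base model in memory and swaps small per-tenant adapters through a least-recently-used cache. Everything here is hypothetical: `AdapterCache`, `fake_loader`, and the tenant IDs are illustrative names, and the loader stands in for whatever actually reads adapter weights from disk or object storage in your stack.

```python
from collections import OrderedDict
from typing import Any, Callable, Dict, List


class AdapterCache:
    """Keep at most `capacity` adapters resident; evict the least recently used."""

    def __init__(self, loader: Callable[[str], Dict[str, Any]], capacity: int = 2):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self._loader = loader          # hypothetical: fetches adapter weights by ID
        self._capacity = capacity
        self._resident: "OrderedDict[str, Dict[str, Any]]" = OrderedDict()
        self.loads = 0                 # counts cache misses, useful for monitoring

    def get(self, adapter_id: str) -> Dict[str, Any]:
        if adapter_id in self._resident:
            # Cache hit: mark this adapter as most recently used.
            self._resident.move_to_end(adapter_id)
            return self._resident[adapter_id]
        # Cache miss: load the adapter, then evict the oldest entry if over capacity.
        weights = self._loader(adapter_id)
        self.loads += 1
        self._resident[adapter_id] = weights
        if len(self._resident) > self._capacity:
            self._resident.popitem(last=False)
        return weights

    def resident_ids(self) -> List[str]:
        """Adapter IDs currently in memory, least recently used first."""
        return list(self._resident)


def fake_loader(adapter_id: str) -> Dict[str, Any]:
    # Placeholder for a real load from storage (an assumption for this sketch).
    return {"adapter_id": adapter_id}


cache = AdapterCache(fake_loader, capacity=2)
for tenant in ["tenant-a", "tenant-b", "tenant-a", "tenant-c"]:
    cache.get(tenant)
```

Because adapters are small relative to the base model, a cache like this can plausibly hold many tenants at once; the right capacity depends on adapter rank, available accelerator memory, and traffic skew, none of which this sketch tries to model.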

Deploy LoRA models on AWS, GCP, and Azure with optimized inference pipelines, auto-scaling, and cost-effective serving architectures.

Implement robust version control for LoRA adaptations, enabling A/B testing, gradual rollouts, and instant rollback capabilities.

Optimize inference latency and throughput with quantization, batch processing, and hardware acceleration techniques.

Track model performance, latency metrics, and resource utilization with comprehensive monitoring solutions.

Implement secure model serving with encryption, access control, and compliance with data protection regulations.

Reduce infrastructure costs with efficient resource allocation, serverless options, and smart scaling strategies.
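The gradual rollout and instant rollback mentioned among the capabilities above can be sketched with deterministic hash bucketing. This is one common approach, not necessarily the one any particular platform uses; `bucket`, `choose_adapter_version`, and the version labels are hypothetical names chosen for illustration.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def choose_adapter_version(
    user_id: str, stable: str, candidate: str, rollout_percent: int
) -> str:
    """Send `rollout_percent` of users to the candidate adapter version.

    The same user always lands in the same bucket, so raising the percentage
    only adds users to the candidate, and setting it to 0 acts as a rollback.
    """
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return candidate if bucket(user_id) < rollout_percent else stable


# Hypothetical version labels for two releases of the same adapter.
users = [f"user-{i}" for i in range(1000)]
at_10 = {u for u in users if choose_adapter_version(u, "v1", "v2", 10) == "v2"}
at_50 = {u for u in users if choose_adapter_version(u, "v1", "v2", 50) == "v2"}
```

Keeping the assignment a pure function of the user ID and a single percentage means the rollout state fits in one configuration value, which is what makes a fast rollback practical.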

Reduce serving costs by 80% with optimized LoRA deployment architectures and resource management.

Learn More →

Implement dynamic LoRA adapter loading for zero-downtime model updates and personalization.

Explore →

Condensed sprints that move teams from prototype to production with a monetization scorecard and localized rollout plans covering EN, DE, IT, FR, and ES audiences.

Explore Service Packages →

Reusable runbooks for observability, incident response, and adapter refresh cycles that protect latency budgets while meeting AdSense policy expectations.

Download Playbooks →

Editorial workflows, media sourcing guides, and compliance checklists that convert AI expertise into high-value pages ready for premium advertisers.

Read Editorial Guides →

The AI Coffee Break session demystifies low-rank decomposition, compares adapter families, and highlights production case studies. We use it as a primer during onboarding workshops so engineers, product leads, and content strategists share a common vocabulary.

After viewing, our team facilitates a roadmap clinic translating the lessons into action items across architecture, analytics, and monetization.

Passing Google AdSense review requires authentic value, trustworthy navigation, and clear policies. We operationalize these expectations across every language variant.